Indicate the expected outcome of a specific probe execution. This is used to decide whether the test passed or failed.

Unlike .rtcSetTestExpectation(), this script command lets you create your own calculation as an asset. Your function is called at the end of the test run. There you decide what to do with the collected metrics and whether the test should pass or fail.

If you want to create a custom expectation based on events in the session, use .rtcSetCustomEventsExpectation() instead. You can also check the other assertion and expectation commands available in testRTC.

Arguments

| Name | Type | Description |
|------|------|-------------|
| asset-name | string | The name of the asset holding the expectation calculation |

Asset

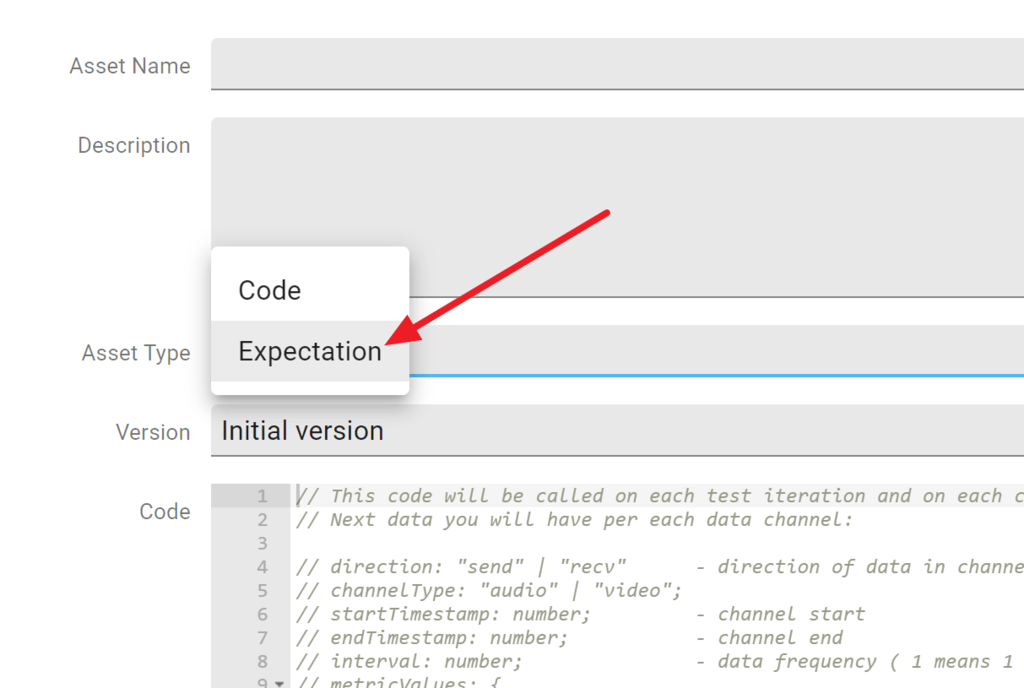

To create a custom expectation, you first need to add a special asset to your project:

Once added, you can modify the expectation asset’s code with the calculation you’d like to do over the data.

The asset is called multiple times, once for each channel collected for this probe:

- To decide which channel you are looking at, check the local variables direction and channelType.

- You can also check startTimestamp, endTimestamp and interval, the time between consecutive metric values. The interval is given in seconds.

- metricValues stores the actual statistics collected. You have access to bits, packets, loss and jitter.

- Place your final verdict in the result variable.
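Because the asset runs once per channel, a common pattern is to leave result as passed for every channel you do not care about and only judge the ones you do. The sketch below shows that filtering with direction and channelType. Inside a real expectation asset these values arrive as plain variables; here they are wrapped in a hypothetical function, checkChannel, so the logic can be exercised on its own with made-up channel data. The 30 jitter threshold is likewise an illustrative assumption, not a testRTC default.

```javascript
// Hypothetical stand-alone wrapper. In a real expectation asset, direction,
// channelType and metricValues are variables provided by testRTC, and the
// script ends with a bare `result;` instead of returning it.
function checkChannel({ direction, channelType, metricValues }) {
  const result = { passed: true, errMessage: "" };

  // Only evaluate incoming video; every other channel passes untouched.
  if (direction !== "recv" || channelType !== "video") {
    return result;
  }

  const jitter = metricValues.jitter;
  if (jitter.length === 0) {
    return result; // no samples collected, nothing to judge
  }

  // Illustrative threshold, in whatever unit the jitter samples use.
  const maxJitter = Math.max(...jitter);
  if (maxJitter > 30) {
    result.passed = false;
    result.errMessage = `Incoming video jitter peaked at ${maxJitter}`;
  }
  return result;
}

// Simulate the per-channel calls with made-up data.
const channels = [
  { direction: "send", channelType: "video", metricValues: { bits: [], packets: [], loss: [], jitter: [50] } },
  { direction: "recv", channelType: "video", metricValues: { bits: [], packets: [], loss: [], jitter: [10, 45, 20] } },
  { direction: "recv", channelType: "audio", metricValues: { bits: [], packets: [], loss: [], jitter: [5] } },
];
const verdicts = channels.map(checkChannel);
console.log(verdicts.map((r) => r.passed)); // [ true, false, true ]
```

Only the incoming video channel fails. The outgoing video channel also has high jitter, but it is skipped by the direction check.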

Example

```javascript
// This code is called on each test iteration and for each channel.
// The following data is available for each channel:
// direction: "send" | "recv";  - direction of data in the channel
// channelType: "audio" | "video";
// startTimestamp: number;      - channel start
// endTimestamp: number;        - channel end
// interval: number;            - data frequency (1 means 1 value/sec)
// metricValues: {
//   bits: number[];
//   packets: number[];
//   loss: number[];
//   jitter: number[];
// };

// keep this
const result = {
  passed: true,
  errMessage: ""
};

// example calculation: average packet loss over the channel
const array = metricValues.loss;
const avgLoss = array.reduce((total, value) => total + value, 0) / array.length;

if (avgLoss > 1) {
  result.passed = false;
  result.errMessage = "Data loss is too big!";
}

// keep this
result;
```

The code above marks as failed every channel whose average packet loss is greater than 1.
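Since interval gives the spacing between samples in seconds, you can also report *when* a problem happened rather than only whether it did. The sketch below is a hypothetical variation, not part of the testRTC template. It assumes that sample i was taken i × interval seconds after the channel started. The helper name findLossSpikes, the threshold of 5 and the sample data are all illustrative.

```javascript
// Hypothetical helper: list the offsets (in seconds from channel start) at
// which a loss sample exceeded the threshold. Assumes sample i corresponds
// to i * interval seconds after startTimestamp.
function findLossSpikes(loss, interval, threshold) {
  const spikes = [];
  loss.forEach((value, i) => {
    if (value > threshold) {
      spikes.push(i * interval);
    }
  });
  return spikes;
}

// In a real asset you would pass metricValues.loss and interval instead.
const result = { passed: true, errMessage: "" };
const spikes = findLossSpikes([0, 0, 7, 1, 9], 2, 5);
if (spikes.length > 0) {
  result.passed = false;
  result.errMessage = `Loss above 5 at ${spikes.join(", ")} s after channel start`;
}
console.log(result.errMessage); // Loss above 5 at 4, 8 s after channel start
```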